Why the industry’s existing frameworks weren’t enough - and what we had to build from scratch

The first wave of AI was about assistance. Autocomplete, summaries, drafts. AI working with a human to do things faster.

The second wave is different. It’s about representation.

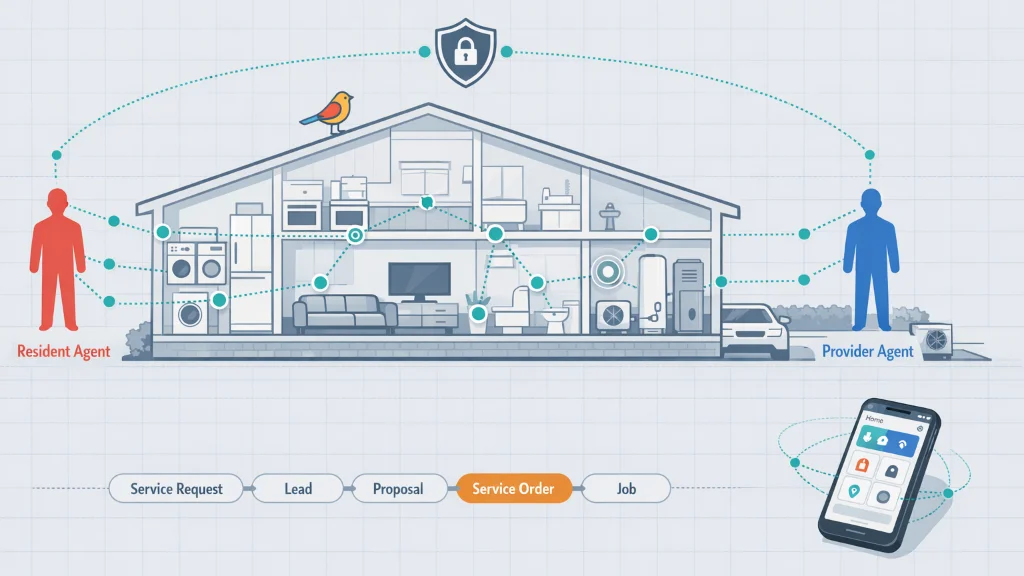

Your AI won’t just help you write a message to a contractor. It will ask clarifying questions on your behalf, engage with another agent on the other side, and converge on an outcome - before you ever have to intervene. At Spanr, that future isn’t a roadmap item. It’s what we’re building today: two autonomous agents, one representing a resident and one representing a service provider, communicating directly to resolve ambiguity and align on a quote.

But this kind of system breaks every assumption embedded in today’s agent tooling. And building it responsibly required us to go back to first principles and design infrastructure the industry hasn’t built yet.

The Problem We Were Actually Solving

Home services have a structural information problem. When a resident calls about a failing HVAC system, they don’t have technical vocabulary. When a provider receives that call, they don’t have enough context to quote accurately. The result: wasted site visits, padded estimates, frustrated customers, and stalled jobs.

We believed agents could bridge this gap. A resident-side agent that can ask smart diagnostic questions. A provider-side agent that can interpret the answers and surface an accurate scope of work. Two agents converging so humans can act on aligned information rather than guesswork.

The problem is straightforward to describe. The system discipline required to build it safely is not.

Why Every Existing Framework Failed Us

Before writing a single line of production code, we surveyed every category of agent technology available. Each had genuine strengths. Each had a structural mismatch for what we needed.

Academic Negotiation Frameworks (GENIUS, NegMAS, ANAC)

These are sophisticated, technically rigorous environments built for true agent-to-agent negotiation. They support bilateral utility functions, offer/counteroffer protocols, and formal convergence analysis. They are genuinely impressive.

But they were built for simulated environments with rational actors and well-defined issue spaces. They optimize for strategic optimality. Spanr’s environment is messy: semi-structured data, photos, maintenance histories, uncertain scope, real liability. Academic systems solve a research problem. We needed to solve a production governance problem. These are different problems.

General Multi-Agent LLM Frameworks (AutoGen, LangGraph, CrewAI)

These frameworks make it easy to wire together agents that can reason, debate, and decompose tasks. For demos and prototypes, they’re excellent.

But they are fundamentally Prompt-Heavy, State-Light. Governance is enforced through instructions inside a prompt - a polite suggestion to an LLM - rather than at the runtime level. There is no structural guarantee that an agent follows its constraints; there is only a probabilistic hope that it will. They don’t enforce immutable negotiation state. They don’t guarantee deterministic failure modes. They don’t define what convergence means, or when a session must terminate rather than continue. They don’t bound what an agent is authorized to commit to.

For agents discussing money, scope, and liability on behalf of real people, probabilistic compliance is not enough. You need infrastructure that makes certain behaviors structurally impossible, not just unlikely.

Enterprise Infrastructure (Anthropic’s MCP and similar)

Standards like the Model Context Protocol represent important progress in agent interoperability. They standardize message schemas, tool discovery, and capability exposure - the plumbing of multi-agent systems.

But MCP defines transport. Spanr needed economic governance. These are different layers of the stack. Critically, MCP has no Session-Layer Security model for economic commitment - it has no concept of what an agent is authorized to agree to, no mechanism for bounding negotiation authority, and no way to reconstruct a decision chain months later when a dispute arises. It can tell you a message was delivered. It cannot tell you whether the agent that sent it had any right to make that commitment. Communication infrastructure and accountability infrastructure are not the same thing.

The Core Gap: Accountability in Economic Systems

The pattern across all three categories is the same. These frameworks assume human oversight, low-risk domains, experimental contexts, and short-lived sessions with no downstream liability.

They make agents capable. They do not make agents accountable.

That is the missing layer - and it’s the layer Spanr had to design from scratch.

What We Actually Built: The Engagement Flow

Before describing our constraints, it’s worth grounding the architecture in what actually happens during a Spanr session.

The flow below shows the full lifecycle of a homeowner engaging a service provider through Spanr - and where agents are doing the work:

The Spanr Engagement Protocol: A deterministic state machine governing the transition from initial diagnostic (Dr. Spanr) to an immutable Work Order.

The journey begins when a homeowner has a Dr. Spanr Conversation — a structured diagnostic exchange that captures the problem and produces a Service Brief: a machine-readable summary of the issue, context, and scope. That brief becomes the resident agent’s source of truth.

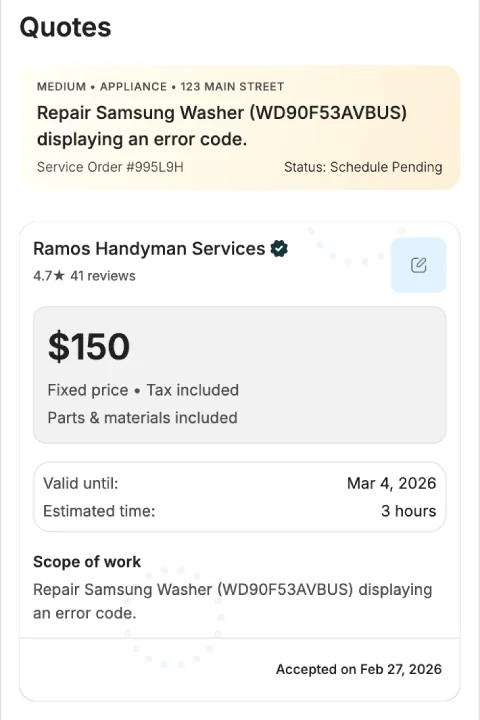

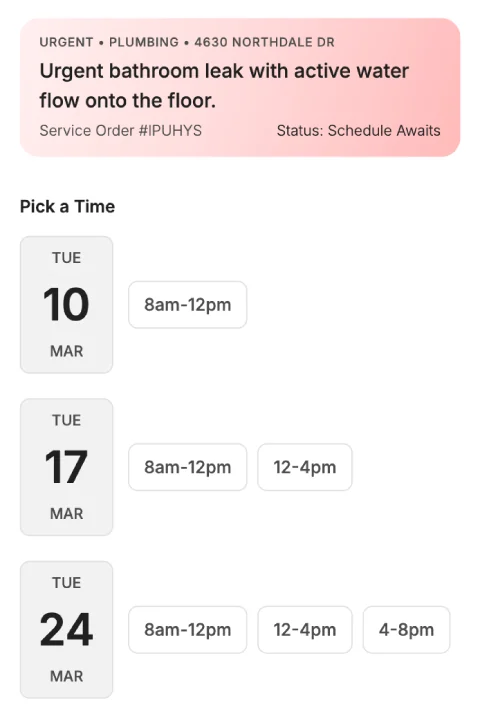

The Agent Compression Effect. A live service order showing Actor-Validated Transitions in action. Each milestone — Request, Acceptance, Quote, Schedule — is an immutable state transition with a timestamp. Notice that Quote Received, Quote Accepted, and Schedule Proposed all occurred on the same day — February 27 — even though the original Service Request had been sitting for nine days. The “Schedule Awaits” status demonstrates Risk-Tiered Autonomy: the agents proposed the slots, but the system halts for explicit human confirmation.

When the homeowner submits a Service Request, it is surfaced to providers as a Lead. The vocabulary shift isn’t cosmetic. In Spanr, these names are permissions, and in our state machine, permissions are non-malleable. We don’t rely on a UI check to decide whether a user is “authorized”; we define the transition logic so that a Submit Proposal action does not exist for a Homeowner identity.

Because our architecture uses Actor-Validated Transitions, a Provider cannot act on a Service Request directly; they can only “Accept a Lead” to begin a negotiation. A Service Request is a resident’s call for help. A Lead is a provider’s business opportunity. The structural separation exists at the state machine level, preventing unauthorized actions before a single line of business logic even runs.

What follows is the critical phase: 2-way follow-ups via Dr. Spanr, where the resident agent and provider agent exchange clarifications, surface ambiguities, and progressively reduce uncertainty about scope and requirements. Neither agent is guessing. Both are operating within defined session constraints.

Once the agents have converged, the provider submits a Proposal. When the homeowner accepts, it doesn’t just create a Work Order - it creates a Service Order: a Contract of Record. At that exact moment, our architecture takes a Policy Snapshot, capturing every user preference and trade compliance rule in effect at the time of commitment. If a dispute arises six months later - a disagreement over a $750 repair, a question about what was authorized - Spanr can reconstruct the exact policy state at the moment of acceptance. Not a chat log. Defensible infrastructure.

A Service Order Contract. Once the homeowner accepts this $150 fixed-price proposal, the system captures a Policy Snapshot, ensuring the scope and pricing are immutable and auditable for the duration of the job.

The agents then coordinate scheduling through a second round of structured follow-ups. Once the Service Order is active, the provider’s view shifts to a Job while the resident’s view shows Service in Progress. Again, this is architectural, not cosmetic: in the Job phase, the provider’s agent is restricted by Phase-Specific Boundaries to logistics and scheduling. It is structurally prevented from renegotiating pricing or hallucinating new terms once the Service Order is active. The resident is never hit with unexpected changes or confusing AI dialogue after they’ve said yes.

Every step is logged. Every agent action is bounded. The human only intervenes at the decision points that require their judgment — accepting a proposal, confirming a schedule — not at every clarifying question along the way. And when both agents are engaged and operating within their constraints, the compression is dramatic: nine days of waiting resolved into a single day of agent-driven coordination the moment both sides were active. That is not a faster chatbot. That is representation.

This is what representation looks like in practice: agents doing the information work so humans can do the decision work.

Nine Foundational System Constraints

Before writing a single prompt, we defined the behavioral envelope that every agent interaction must operate within:

Actor-Validated Transitions. Every state change is validated not just by what is happening, but by who is acting. A Provider cannot accept a Proposal - only a Homeowner can. A System cannot submit a Quote - only a Provider can. These are not application-layer permission checks. They are enforced at the state machine level, before any business logic runs. Even if an upstream validation check fails to catch an invalid request, the state machine still rejects the transition.

| Transition | Provider | Homeowner | System |
|---|---|---|---|
| Submit Proposal | ✓ | - | - |
| Accept Proposal | - | ✓ | - |
| Decline Proposal | - | ✓ | - |
| Withdraw Engagement | - | - | ✓ |
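
To make the table above concrete, here is a minimal sketch of an actor check that runs before any business logic. It is an illustration, not Spanr’s implementation: the names `Actor`, `ALLOWED_ACTORS`, `TransitionRejected`, and `apply_transition` are assumptions introduced for this example.

```python
from enum import Enum


class Actor(Enum):
    PROVIDER = "provider"
    HOMEOWNER = "homeowner"
    SYSTEM = "system"


class TransitionRejected(Exception):
    """Raised when an actor attempts a transition it is not permitted to make."""


# Hypothetical encoding of the table: transition name -> actors allowed to trigger it.
ALLOWED_ACTORS = {
    "submit_proposal": {Actor.PROVIDER},
    "accept_proposal": {Actor.HOMEOWNER},
    "decline_proposal": {Actor.HOMEOWNER},
    "withdraw_engagement": {Actor.SYSTEM},
}


def apply_transition(transition: str, actor: Actor) -> str:
    """Validate the acting identity first; business logic would only run afterwards."""
    allowed = ALLOWED_ACTORS.get(transition)
    if allowed is None:
        raise TransitionRejected(f"unknown transition: {transition}")
    if actor not in allowed:
        raise TransitionRejected(f"{actor.value} may not {transition}")
    return f"{transition} applied by {actor.value}"
```

In this sketch, `apply_transition("accept_proposal", Actor.PROVIDER)` raises `TransitionRejected` regardless of what any upstream check decided, because the lookup table is the only source of truth.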

Immutable Negotiation State. Every agent handshake is recorded as an append-only, auditable log. No state can be silently overwritten. This is the foundation of any future dispute resolution.
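
One common way to make an append-only log tamper-evident is to chain each entry to the hash of the previous one. The sketch below assumes that technique; the post does not say how Spanr stores its log, and `LogEntry`, `NegotiationLog`, and `verify` are hypothetical names.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class LogEntry:
    index: int
    actor: str
    event: str
    prev_hash: str
    entry_hash: str


def _digest(index, actor, event, prev_hash):
    payload = json.dumps([index, actor, event, prev_hash]).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class NegotiationLog:
    """Append-only log: entries can be added and read, never edited or removed."""

    def __init__(self):
        self._entries = []

    def append(self, actor, event):
        prev_hash = self._entries[-1].entry_hash if self._entries else "genesis"
        index = len(self._entries)
        entry = LogEntry(index, actor, event, prev_hash,
                         _digest(index, actor, event, prev_hash))
        self._entries.append(entry)
        return entry

    def entries(self):
        return tuple(self._entries)  # callers receive an immutable copy


def verify(entries):
    """Recompute every hash; an overwritten or reordered entry breaks the chain."""
    prev_hash = "genesis"
    for i, e in enumerate(entries):
        if e.index != i or e.prev_hash != prev_hash:
            return False
        if e.entry_hash != _digest(e.index, e.actor, e.event, e.prev_hash):
            return False
        prev_hash = e.entry_hash
    return True
```

The design choice here is that silent overwrites become detectable rather than merely discouraged: any edit to a past entry changes its hash and invalidates everything after it.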

Policy Snapshots. When a Quote becomes a Service Order, the system captures the exact policy in effect at that moment - user preferences, authorization thresholds, trade compliance rules. Six months later, when a homeowner asks why the agent accepted a $750 proposal, the answer isn’t “we think so.” It’s “your auto-accept threshold was $800 at that time. Here’s the snapshot. You changed it to $500 on this date.”
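
The $750 example above can be sketched with a frozen copy of the live policy taken at acceptance time. This is an illustration under assumptions: the field names, `PolicySnapshot`, `ServiceOrder`, and `accept_proposal` are hypothetical, and only the $750, $800, and $500 figures come from the text.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class PolicySnapshot:
    captured_at: datetime
    auto_accept_threshold: float  # dollars
    compliance_rules: tuple


@dataclass(frozen=True)
class ServiceOrder:
    proposal_amount: float
    policy: PolicySnapshot


def accept_proposal(amount, live_policy, now=None):
    """Copy the live policy into a frozen snapshot at the moment of acceptance."""
    snapshot = PolicySnapshot(
        captured_at=now or datetime.now(timezone.utc),
        auto_accept_threshold=live_policy["auto_accept_threshold"],
        compliance_rules=tuple(live_policy["compliance_rules"]),
    )
    return ServiceOrder(proposal_amount=amount, policy=snapshot)


live_policy = {
    "auto_accept_threshold": 800.0,
    "compliance_rules": ["licensed_plumber_for_gas_work"],
}
order = accept_proposal(750.0, live_policy)

# The homeowner later lowers the threshold; the order's snapshot is unaffected.
live_policy["auto_accept_threshold"] = 500.0
```

After the preference change, `order.policy.auto_accept_threshold` still reads 800.0, which is the property a dispute review would rely on.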

Immutable Compliance Layer. Not all policies are equal. Trade requirements sit above user preferences in a strict hierarchy and cannot be overridden by anyone - not by users, not by bugs. A homeowner who sets “auto-accept all proposals” still cannot bypass the requirement for a licensed plumber on gas work. Safety is structural, not behavioral.
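
A strict hierarchy like this can be expressed by evaluating trade rules before user preferences are even consulted. The following is a sketch with assumed names (`TRADE_REQUIREMENTS`, `may_auto_accept`) and an assumed rule table built from the gas-work example in the text.

```python
# Hypothetical trade rule table: work category -> license the provider must hold.
TRADE_REQUIREMENTS = {"gas_work": "licensed_plumber"}


def may_auto_accept(work_category, provider_licenses, user_prefs):
    """Compliance rules are checked first and cannot be overridden by preferences."""
    required = TRADE_REQUIREMENTS.get(work_category)
    if required is not None and required not in provider_licenses:
        return False  # the trade requirement wins, whatever the user configured
    return bool(user_prefs.get("auto_accept_all", False))
```

Because the compliance branch returns before preferences are read, there is no preference value that can reach past it.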

Deterministic Failure Modes. Agents don’t guess when they lack information. They emit typed errors - INSUFFICIENT_CONTEXT, SCOPE_AMBIGUOUS, AUTHORIZATION_EXCEEDED - so the system can respond predictably to every failure case. Natural language ambiguity is the enemy of reliable systems.
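
As a rough sketch of what typed errors look like in code, the example below returns either a quote or one of the three error codes named above. The `AgentResult` shape, the `propose_quote` function, and the brief's field names are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class AgentError(Enum):
    INSUFFICIENT_CONTEXT = "INSUFFICIENT_CONTEXT"
    SCOPE_AMBIGUOUS = "SCOPE_AMBIGUOUS"
    AUTHORIZATION_EXCEEDED = "AUTHORIZATION_EXCEEDED"


@dataclass(frozen=True)
class AgentResult:
    quote: Optional[float] = None
    error: Optional[AgentError] = None


def propose_quote(brief: dict, authority_limit: float) -> AgentResult:
    """Return a quote or a typed error; never a free-text guess."""
    estimate = brief.get("estimate")
    if "issue" not in brief or estimate is None:
        return AgentResult(error=AgentError.INSUFFICIENT_CONTEXT)
    if brief.get("scope") is None:
        return AgentResult(error=AgentError.SCOPE_AMBIGUOUS)
    if estimate > authority_limit:
        return AgentResult(error=AgentError.AUTHORIZATION_EXCEEDED)
    return AgentResult(quote=estimate)
```

Callers can then branch on `result.error` with an exhaustive match instead of parsing natural language.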

Phase-Specific Agent Boundaries. Agents don’t operate with a single system prompt across all contexts. Each phase of the engagement defines what the agent can and cannot discuss. In PROPOSAL_PENDING, the agent can clarify the issue but cannot discuss pricing - no proposal exists. In PROPOSAL_ACCEPTED, the agent can coordinate scheduling but cannot renegotiate terms. This prevents entire categories of hallucination by design: the agent doesn’t drift into topics that are contextually impossible, because it has no guidance to go there.
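
One simple way to picture phase boundaries is an allowlist of topics per phase, with everything else denied by default. In this sketch only the two phase names come from the text; the topic labels and the `topic_allowed` helper are hypothetical.

```python
# Hypothetical topic sets; only the two phase names come from the text above.
PHASE_TOPICS = {
    "PROPOSAL_PENDING": {"issue_clarification"},
    "PROPOSAL_ACCEPTED": {"scheduling"},
}


def topic_allowed(phase: str, topic: str) -> bool:
    """A topic is allowed only if the current phase lists it explicitly."""
    return topic in PHASE_TOPICS.get(phase, set())
```

An unknown phase maps to an empty set, so the default is that nothing may be discussed.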

Monotonic Progress. Every agent interaction must either reduce uncertainty or terminate. An agent cannot loop, hedge, or stall indefinitely. If the state isn’t moving toward resolution, the session ends.
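
The rule can be sketched by tracking a single uncertainty measure per turn and ending the session the first time it fails to shrink. Using the count of open questions as that measure is an assumption for this example, as is the `run_session` function.

```python
def run_session(open_questions_per_turn):
    """Consume a list of open-question counts, one per agent turn.

    Returns ("converged", turn) when no questions remain, or
    ("terminated", turn) as soon as a turn fails to reduce the count.
    """
    previous = None
    for turn, remaining in enumerate(open_questions_per_turn, start=1):
        if remaining == 0:
            return ("converged", turn)
        if previous is not None and remaining >= previous:
            return ("terminated", turn)  # no progress: end the session
        previous = remaining
    # Turns ran out with questions still open: terminate rather than wait.
    return ("terminated", len(open_questions_per_turn))
```

For example, `run_session([5, 3, 3, 1])` stops at the third turn, because the count stayed at 3 instead of dropping.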

Context Expiry. Sessions are time-bounded. Context does not persist beyond its authorization window. An agent cannot act on stale information.
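
A time-bounded context can be sketched as a value that refuses to be read outside its window. The `SessionContext` class, the `SessionExpired` exception, and the 24-hour window used in the usage note are illustrative assumptions, not values from Spanr’s system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


class SessionExpired(Exception):
    """Raised when context is read outside its authorization window."""


@dataclass(frozen=True)
class SessionContext:
    brief: str
    opened_at: datetime
    ttl: timedelta

    def read(self, now: datetime) -> str:
        """Return the brief only while the authorization window is open."""
        if now >= self.opened_at + self.ttl:
            raise SessionExpired("context is outside its authorization window")
        return self.brief
```

With `ttl=timedelta(hours=24)`, a read one hour after `opened_at` succeeds and a read 25 hours after raises `SessionExpired`, so stale information never reaches the agent.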

Risk-Tiered Autonomy. Not all decisions carry the same risk. Agents have graduated authority — they can act freely on low-stakes decisions, must seek confirmation on medium-stakes ones, and cannot act autonomously on high-stakes commitments without explicit authorization. As shown in the service order above, scheduling confirmation is always returned to the human — the agent proposes, but the homeowner confirms. The system knows exactly where agent authority ends and human judgment begins.

Example of a Human-in-the-Loop Gate. While agents handle the high-velocity coordination of availability, the final commitment is surfaced as a deterministic choice for the user, preventing unauthorized scheduling.
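
Risk-Tiered Autonomy can be sketched as a lookup from action to tier, and from tier to what the agent must do next. The tier assignments below are assumptions for illustration; the text only establishes that scheduling confirmation goes back to the human. `Decision`, `RISK_TIER`, and `gate` are hypothetical names.

```python
from enum import Enum


class Decision(Enum):
    ACT = "act"
    CONFIRM_WITH_HUMAN = "confirm_with_human"
    REQUIRE_AUTHORIZATION = "require_explicit_authorization"


# Hypothetical tier assignments for a handful of actions.
RISK_TIER = {
    "ask_clarifying_question": "low",
    "propose_schedule_slots": "low",
    "confirm_schedule": "medium",
    "accept_proposal": "high",
}

_TIER_TO_DECISION = {
    "low": Decision.ACT,
    "medium": Decision.CONFIRM_WITH_HUMAN,
    "high": Decision.REQUIRE_AUTHORIZATION,
}


def gate(action: str) -> Decision:
    """Map an action to the agent's permitted next step."""
    # Actions missing from the table default to the strictest tier.
    return _TIER_TO_DECISION[RISK_TIER.get(action, "high")]
```

Defaulting unknown actions to the strictest tier means a newly added capability is gated until someone deliberately classifies it.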

The Agent Service Bus: Built Without a Framework

One of the most consequential architectural decisions we made was building our own routing and dispatch layer rather than adopting an existing orchestration framework. Most developers think of agent-to-agent communication as peer-to-peer chat - two agents with a shared channel. That mental model is exactly wrong for an economic system.

What we built is better described as an Agent Service Bus: a centralized dispatcher that owns the communication layer entirely. Every message between the Resident Agent and the Provider Agent passes through this router. No message ever travels directly between agents.

As shown in the Dr. Spanr phase of our engagement flow, agents operate in a half-duplex mode - the router ensures that one agent’s output is validated against the Service Brief before the other agent is even notified. This isn’t a performance constraint; it’s a governance constraint. It means the system has a choke point where it can inspect, validate, and reject any message that violates the session’s schema before it becomes part of the negotiation record.

That schema validation is not optional and it is not handled by the LLM. If the Provider Agent sends a message that doesn’t conform to the Proposal schema - missing required fields, asserting an out-of-scope commitment, or referencing an expired session - the router rejects it before it reaches the Resident Agent. The rejection is a typed error, logged, and surfaced back to the provider’s system. The LLM is never trusted to self-police its own output.
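
The three rejection cases above can be sketched as a router that validates in plain code before forwarding anything. This is a simplified illustration: the required fields, the error codes `SCHEMA_INVALID`, `SESSION_EXPIRED`, and `OUT_OF_SCOPE`, and the `Router` class itself are assumptions, not Spanr’s actual schema or API.

```python
from dataclasses import dataclass

REQUIRED_PROPOSAL_FIELDS = {"session_id", "scope", "price"}  # assumed schema


@dataclass(frozen=True)
class Rejection:
    code: str
    detail: str


class Router:
    """Central dispatcher: validates every message before forwarding it."""

    def __init__(self, active_sessions, agreed_scopes):
        self.active_sessions = set(active_sessions)
        self.agreed_scopes = dict(agreed_scopes)  # session_id -> scope from the Service Brief
        self.audit_log = []   # every delivery and every rejection is recorded
        self.delivered = []   # stand-in for messages forwarded to the Resident Agent

    def route_proposal(self, message: dict):
        rejection = self._validate(message)
        self.audit_log.append((message, rejection))
        if rejection is None:
            self.delivered.append(message)
        return rejection

    def _validate(self, message):
        missing = REQUIRED_PROPOSAL_FIELDS - set(message)
        if missing:
            return Rejection("SCHEMA_INVALID", f"missing fields: {sorted(missing)}")
        if message["session_id"] not in self.active_sessions:
            return Rejection("SESSION_EXPIRED", "session is not active")
        if message["scope"] != self.agreed_scopes.get(message["session_id"]):
            return Rejection("OUT_OF_SCOPE", "scope differs from the Service Brief")
        return None
```

Nothing in `_validate` calls a model. The checks are ordinary code, so a malformed or out-of-scope proposal is rejected and logged the same way every time.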

This design unlocks capabilities no off-the-shelf framework provides:

Deterministic orchestration. By applying proven distributed systems principles - schema contracts, typed failures, centralized state - we bring the rigor of a service mesh to the “wild west” of LLM interactions. The router enforces what agents are allowed to say, not just what they’re instructed to say.

Load-aware dispatch. The router directs traffic based on agent availability, preventing session bottlenecks when multiple homeowner/provider pairs are active simultaneously.

Session integrity. The dispatcher enforces that messages are only forwarded within a valid, active session scope. Expired sessions cannot receive new messages - this is enforced at the infrastructure layer, not the prompt layer.

Observability at the transport layer. Because every message passes through the router, we have a complete, real-time audit trail of every inter-agent communication - not just what agents decided, but every message that moved through the system, every schema rejection, every typed failure.

Failure isolation. If an agent fails mid-session, the dispatcher surfaces a typed failure rather than allowing the session to hang silently or produce an unlogged state transition.

We built this from scratch, without any existing agent framework, because adopting a framework would have meant inheriting its assumptions - and every existing framework assumes the LLM is the last line of defense. In our system, the LLM is never the last line of defense. The runtime is.

The Engine Behind the Words

One thing that distinguishes Spanr’s architecture is that our vocabulary is backed by engineering constraints. The terms we use - Service Request, Lead, Service Order, Job - aren’t UX labels. Each one maps to a distinct permission boundary, phase constraint, and audit obligation in our architecture.

| Term | Who Sees It | What It Enforces Architecturally |
|---|---|---|
| Service Request | Resident | Only a Resident can submit one. Triggers provider notification pipeline. |
| Lead | Provider | Only a Provider can accept one. Opens a negotiation session. |
| Proposal | Provider | Only a Provider can submit. Schema-validated by the router before delivery. |
| Service Order | System | Created on Homeowner acceptance. Policy Snapshot captured at this exact moment. |
| Job | Provider (post-acceptance) | Phase boundary active: agent restricted to logistics, cannot renegotiate terms. |
| Service in Progress | Resident (post-acceptance) | Phase boundary active: agent restricted to scheduling and status updates. |

This is what it means to say the architecture is the product. The LLM is a capable component - but the guarantees live in the state machine, the compliance layer, and the audit trail. Those guarantees don’t depend on a model following instructions. They hold because the runtime makes violations structurally impossible.

The Principle Behind the Architecture

Most agent frameworks are built around a question: How do we make agents more capable?

That is a good question. It is not the question we needed to answer.

Our question was: How do we make agents accountable?

Capability without accountability is a liability in any economic system. An agent that can negotiate on your behalf but cannot be audited, cannot be bounded, and cannot fail gracefully is not an asset - it’s a risk.

The infrastructure of representation - the rails that make autonomous agent-to-agent interactions safe enough to deploy in the real world - doesn’t exist yet as an off-the-shelf product. The industry is still early. Frameworks are maturing. Standards are forming.

But the gap is real. And for anyone building systems where agents make commitments on behalf of real people in high-stakes, economically consequential domains, the gap matters today.

What Comes Next

We are still early in a significant architectural shift. The move from AI-as-assistant to AI-as-representative will accelerate across industries - property management, logistics, procurement, insurance, and beyond. Every domain where information asymmetry creates friction is a candidate.

The teams that will build durable systems in this space are not the ones that move fastest to deploy capable agents. They are the ones that invest in the governance layer first - immutable state, bounded authority, deterministic failure, and full auditability.

Capability is the raw material. Accountability is the finished infrastructure.

At Spanr, we aren’t just making agents smart. We are making them trustworthy. The LLM is a component. The architecture is the product. Because once agents start representing real people in real transactions, someone has to build the rails - and the rails have to hold.

Spanr is building The Property Intelligence Platform — the operating infrastructure for agent-to-agent representation in home services.